agi.score

Cross-benchmark aggregate rankings for frontier LLMs. Multiple sources averaged element-wise into one comparable score.

Combined ranking

| # | Model | Org | Aggregate | Sources |
|---|---|---|---|---|
| 1 | GPT-5.5 Thinking xHigh Effort | OpenAI | 1.000 | LiveBench (80.71) |
| 2 | GPT-5.4 Thinking xHigh Effort | OpenAI | 0.924 | both (MiMo 0.856 / LB 0.991) |
| 3 | Claude 4.7 Opus Thinking xHigh Effort | Anthropic | 0.921 | LiveBench (76.91) |
| 4 | GPT-5.5 Thinking High Effort | OpenAI | 0.907 | LiveBench (76.24) |
| 5 | Claude 4.5 Opus Thinking High Effort | Anthropic | 0.902 | LiveBench (75.96) |
| 6 | Claude 4.6 Sonnet Thinking Medium Effort | Anthropic | 0.892 | LiveBench (75.47) |
| 7 | Claude 4.6 Sonnet Thinking High Effort | Anthropic | 0.888 | LiveBench (75.32) |
| 8 | GPT-5.4 Thinking High Effort | OpenAI | 0.883 | LiveBench (75.07) |
| 9 | Claude 4.7 Opus Thinking High Effort | Anthropic | 0.880 | LiveBench (74.89) |
| 10 | GPT-5.2 High | OpenAI | 0.879 | LiveBench (74.84) |
| 11 | GPT-5.2 Codex | OpenAI | 0.867 | LiveBench (74.30) |
| 12 | Claude 4.6 Opus Thinking High Effort | Anthropic | 0.866 | both (MiMo 0.822 / LB 0.909) |
| 13 | GPT-5.1 Codex Max High | OpenAI | 0.861 | LiveBench (73.98) |
| 14 | Claude 4.5 Opus Thinking Medium Effort | Anthropic | 0.857 | LiveBench (73.78) |
| 15 | Gemini 3 Pro Preview High | | 0.849 | LiveBench (73.39) |
| 16 | GPT-5.3 Codex High | OpenAI | 0.835 | LiveBench (72.76) |
| 17 | Gemini 3 Flash Preview High | | 0.828 | LiveBench (72.40) |
| 18 | Claude 4.7 Opus Thinking Medium Effort | Anthropic | 0.826 | LiveBench (72.31) |
| 19 | GPT-5.1 High | OpenAI | 0.821 | LiveBench (72.04) |
| 20 | GPT-5.1 Codex Max | OpenAI | 0.819 | LiveBench (71.95) |
| 21 | GPT-5.2 Medium | OpenAI | 0.816 | LiveBench (71.84) |
| 22 | GPT-5.3 Codex xHigh | OpenAI | 0.812 | LiveBench (71.64) |
| 23 | Qwen 3.6 Plus | Alibaba | 0.796 | LiveBench (70.85) |
| 24 | GPT-5 Pro | OpenAI | 0.788 | LiveBench (70.48) |
| 25 | Claude 4.6 Sonnet Thinking Low Effort | Anthropic | 0.787 | LiveBench (70.44) |
| 26 | GPT-5.4 Nano xHigh | OpenAI | 0.781 | LiveBench (70.13) |
| 27 | GPT-5.1 Medium | OpenAI | 0.761 | LiveBench (69.17) |
| 28 | Claude 4.7 Opus Thinking Low Effort | Anthropic | 0.760 | LiveBench (69.13) |
| 29 | Kimi K2.5 Thinking | Moonshot AI | 0.759 | LiveBench (69.07) |
| 30 | GLM 5 | Z.AI | 0.755 | LiveBench (68.85) |
| 31 | GPT-5.5 Thinking Medium Effort | OpenAI | 0.751 | LiveBench (68.66) |
| 32 | Kimi K2.6 Thinking | Moonshot AI | 0.750 | both (MiMo 0.677 / LB 0.823) |
| 33 | GPT-5.1 Codex | OpenAI | 0.750 | LiveBench (68.61) |
| 34 | Claude Sonnet 4.5 Thinking | Anthropic | 0.741 | LiveBench (68.19) |
| 35 | Grok 4.20 Beta | xAI | 0.736 | LiveBench (67.96) |
| 36 | GPT-5.4 Mini xHigh | OpenAI | 0.727 | LiveBench (67.54) |
| 37 | GLM 5.1 | Z.AI | 0.725 | both (MiMo 0.667 / LB 0.782) |
| 38 | DeepSeek V4 Flash | DeepSeek | 0.721 | LiveBench (67.25) |
| 39 | Grok 4.3 | xAI | 0.711 | LiveBench (66.74) |
| 40 | DeepSeek V4 Pro | DeepSeek | 0.709 | both (MiMo 0.566 / LB 0.852) |
| 41 | GPT-5 Mini High | OpenAI | 0.694 | LiveBench (65.91) |
| 42 | Claude 4.5 Opus Thinking Low Effort | Anthropic | 0.687 | LiveBench (65.59) |
| 43 | Qwen 3.6 27B | Alibaba | 0.686 | LiveBench (65.56) |
| 44 | GPT-5.2 Low | OpenAI | 0.682 | LiveBench (65.33) |
| 45 | Gemini 3 Pro Preview Low | | 0.652 | LiveBench (63.90) |
| 46 | GPT-5.4 Mini High | OpenAI | 0.645 | LiveBench (63.57) |
| 47 | Minimax M2.7 | Minimax | 0.644 | LiveBench (63.49) |
| 48 | MiMo-V2.5-Pro | — | 0.632 | MiMo only |
| 49 | GPT-5.4 Nano High | OpenAI | 0.628 | LiveBench (62.75) |
| 50 | DeepSeek V3.2 Thinking | DeepSeek | 0.617 | LiveBench (62.20) |
| 51 | Grok 4 | xAI | 0.613 | LiveBench (62.02) |
| 52 | Gemini 3.1 Pro Preview High | | 0.611 | both (MiMo 0.239 / LB 0.984) |
| 53 | Claude 4.1 Opus Thinking | Anthropic | 0.609 | LiveBench (61.81) |
| 54 | Gemini 3.1 Flash Lite Preview High | | 0.606 | LiveBench (61.68) |
| 55 | Gemma 4 31B | | 0.605 | LiveBench (61.62) |
| 56 | Kimi K2 Thinking | Moonshot AI | 0.604 | LiveBench (61.59) |
| 57 | Claude Haiku 4.5 Thinking | Anthropic | 0.599 | LiveBench (61.32) |
| 58 | Claude 4 Sonnet Thinking | Anthropic | 0.598 | LiveBench (61.27) |
| 59 | GPT-5 Mini | OpenAI | 0.592 | LiveBench (61.01) |
| 60 | GPT-5.1 Codex Mini | OpenAI | 0.579 | LiveBench (60.38) |
| 61 | Qwen 3.6 Flash | Alibaba | 0.579 | LiveBench (60.37) |
| 62 | Minimax M2.5 | Minimax | 0.574 | LiveBench (60.14) |
| 63 | GPT-5.3 Instant | OpenAI | 0.571 | LiveBench (59.99) |
| 64 | Grok 4.1 Fast | xAI | 0.571 | LiveBench (59.99) |
| 65 | GPT-5.1 Low | OpenAI | 0.570 | LiveBench (59.95) |
| 66 | Claude 4.5 Opus Medium Effort | Anthropic | 0.553 | LiveBench (59.10) |
| 67 | DeepSeek V3.2 Exp Thinking | DeepSeek | 0.549 | LiveBench (58.90) |
| 68 | Claude 4.5 Opus High Effort | Anthropic | 0.542 | LiveBench (58.59) |
| 69 | GPT-5.4 Nano Medium | OpenAI | 0.540 | LiveBench (58.46) |
| 70 | Gemini 2.5 Pro (Max Thinking) | | 0.537 | LiveBench (58.33) |
| 71 | GPT-5.4 Mini Medium | OpenAI | 0.537 | LiveBench (58.33) |
| 72 | GLM 4.7 | Z.AI | 0.532 | LiveBench (58.09) |
| 73 | Gemini 3 Flash Preview Minimal | | 0.496 | LiveBench (56.35) |
| 74 | Claude 4.5 Opus Low Effort | Anthropic | 0.484 | LiveBench (55.77) |
| 75 | GLM 4.6 | Z.AI | 0.472 | LiveBench (55.19) |
| 76 | Claude 4.1 Opus | Anthropic | 0.457 | LiveBench (54.45) |
| 77 | Claude Sonnet 4.5 | Anthropic | 0.441 | LiveBench (53.69) |
| 78 | Gemini 2.5 Flash (Max Thinking) (2025-09-25) | | 0.428 | LiveBench (53.09) |
| 79 | GPT-5 Mini Low | OpenAI | 0.428 | LiveBench (53.07) |
| 80 | Qwen 3 235B A22B Thinking 2507 | Alibaba | 0.426 | LiveBench (52.97) |
| 81 | DeepSeek V3.2 | DeepSeek | 0.403 | LiveBench (51.84) |
| 82 | Claude 4 Sonnet | Anthropic | 0.385 | LiveBench (50.98) |
| 83 | Qwen 3 Next 80B A3B Thinking | Alibaba | 0.373 | LiveBench (50.41) |
| 84 | DeepSeek V3.2 Exp | DeepSeek | 0.361 | LiveBench (49.85) |
| 85 | GLM 5V Turbo | Z.AI | 0.357 | LiveBench (49.62) |
| 86 | GPT-5.4 Mini Low | OpenAI | 0.355 | LiveBench (49.54) |
| 87 | GPT-5.2 No Thinking | OpenAI | 0.342 | LiveBench (48.91) |
| 88 | Qwen 3 235B A22B Instruct 2507 | Alibaba | 0.340 | LiveBench (48.84) |
| 89 | GPT-5.4 Nano Low | OpenAI | 0.337 | LiveBench (48.67) |
| 90 | GPT-5 Nano High | OpenAI | 0.336 | LiveBench (48.62) |
| 91 | GPT-5 Nano | OpenAI | 0.335 | LiveBench (48.56) |
| 92 | Qwen 3 Next 80B A3B Instruct | Alibaba | 0.330 | LiveBench (48.35) |
| 93 | Kimi K2 Instruct | Moonshot AI | 0.325 | LiveBench (48.10) |
| 94 | MiMo V2 Pro | Xiaomi | 0.321 | both (MiMo 0.110 / LB 0.533) |
| 95 | Gemini 2.5 Flash (Max Thinking) (2025-06-05) | | 0.318 | LiveBench (47.74) |
| 96 | GPT OSS 120b | OpenAI | 0.284 | LiveBench (46.09) |
| 97 | Claude Haiku 4.5 | Anthropic | 0.268 | LiveBench (45.33) |
| 98 | Grok Code Fast | xAI | 0.264 | LiveBench (45.13) |
| 99 | Qwen 3 32B | Alibaba | 0.231 | LiveBench (43.56) |
| 100 | GPT-5.1 No Thinking | OpenAI | 0.212 | LiveBench (42.65) |
| 101 | Gemini 2.5 Flash Lite (Max Thinking) (2025-06-17) | | 0.210 | LiveBench (42.56) |
| 102 | Gemini 2.5 Flash Lite (Max Thinking) (2025-09-25) | | 0.207 | LiveBench (42.39) |
| 103 | Devstral 2 | Mistral | 0.183 | LiveBench (41.24) |
| 104 | GLM 4.6V | Z.AI | 0.159 | LiveBench (40.07) |
| 105 | GPT-5 Mini Minimal | OpenAI | 0.155 | LiveBench (39.90) |
| 106 | Grok 4.20 Beta (Non-Reasoning) | xAI | 0.151 | LiveBench (39.70) |
| 107 | Qwen 3 30B A3B | Alibaba | 0.137 | LiveBench (39.01) |
| 108 | GPT-5.4 Mini | OpenAI | 0.094 | LiveBench (36.95) |
| 109 | Elephant Alpha | OpenRouter | 0.074 | LiveBench (35.97) |
| 110 | GPT-5 Nano Low | OpenAI | 0.040 | LiveBench (34.34) |
| 111 | Grok 4.1 Fast (Non-Reasoning) | xAI | 0.022 | LiveBench (33.45) |
| 112 | Trinity Large Preview | Arcee | 0.007 | LiveBench (32.74) |
| 113 | Nemotron 3 Super 120B A12B | NVIDIA | 0.002 | LiveBench (32.51) |
| 114 | GPT-5.4 Nano | OpenAI | 0.000 | LiveBench (32.39) |

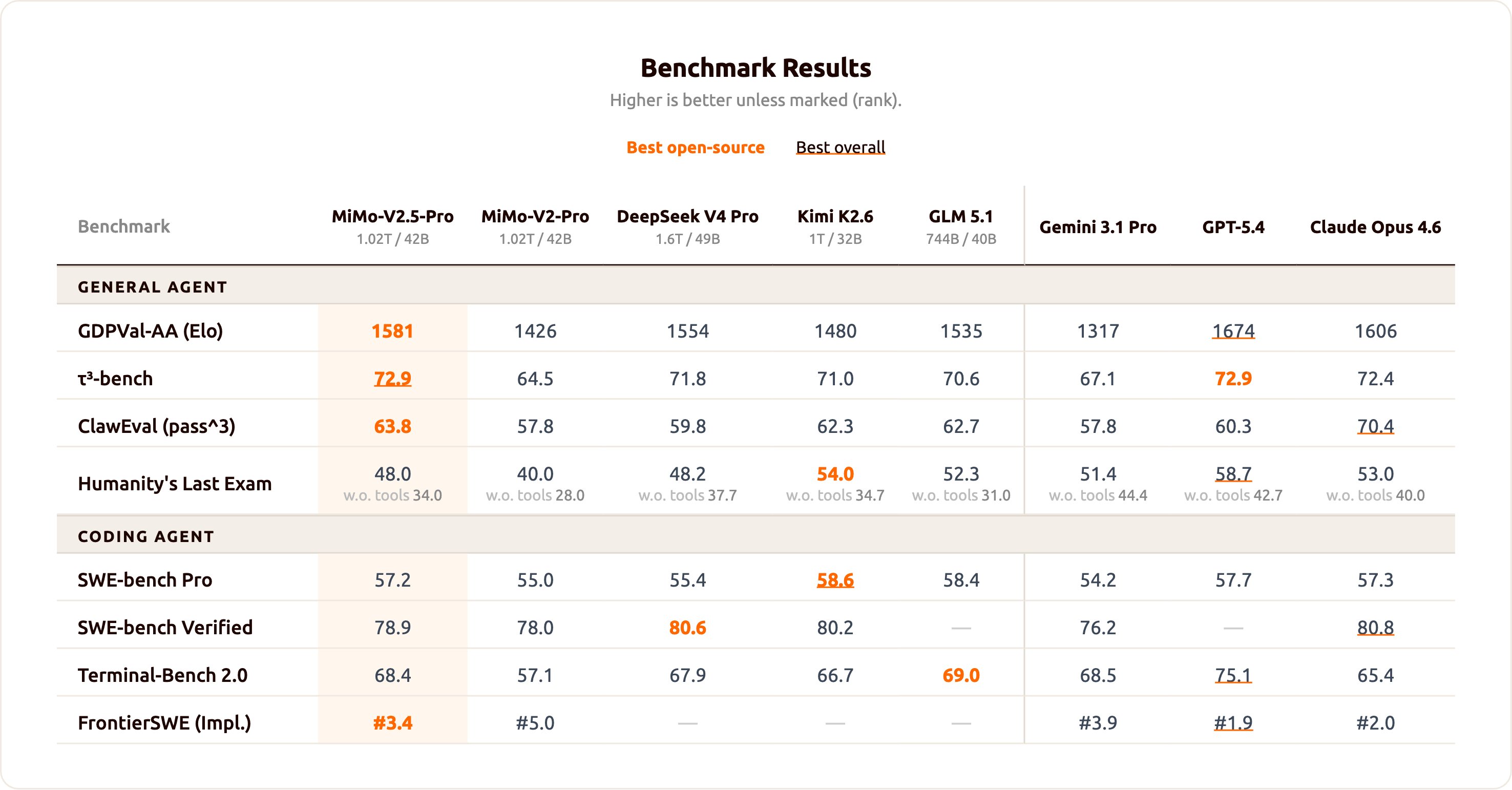

Source 1 — MiMo-V2.5-Pro release comparison (May 2026)

Source 2 — LiveBench 2026-01-08 (full leaderboard)

Methodology

Per-source normalisation. Each source is normalised 0–1 within itself: the highest reported score becomes 1.0, the lowest becomes 0.0, intermediate values are linear interpolations. For MiMo data this is done per benchmark column then averaged across the 8 columns to produce a MiMo aggregate per model. For LiveBench the Global Average (already an aggregate of 7 category averages) is normalised directly across 113 models.
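The normalisation step can be sketched in a few lines of Python. This is a minimal illustration, not the site's actual code: the function names `min_max_normalise` and `mean_of_normalised_columns` are hypothetical, the toy columns use made-up numbers, and the sketch assumes every benchmark column reports a score for every model.

```python
def min_max_normalise(scores):
    """Map raw scores linearly onto [0, 1]: the minimum becomes 0.0, the maximum 1.0."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        raise ValueError("cannot normalise: all scores are identical")
    return {name: (value - lo) / (hi - lo) for name, value in scores.items()}


def mean_of_normalised_columns(columns):
    """Normalise each benchmark column on its own, then average per model.

    Assumption: every column contains the same set of models.
    """
    normalised = [min_max_normalise(col) for col in columns]
    models = normalised[0].keys()
    return {m: sum(col[m] for col in normalised) / len(normalised) for m in models}


# LiveBench Global Averages copied from three rows of the ranking table
# (the highest score, one intermediate score, and the lowest score).
livebench = {
    "GPT-5.5 Thinking xHigh Effort": 80.71,
    "Claude 4.7 Opus Thinking xHigh Effort": 76.91,
    "GPT-5.4 Nano": 32.39,
}
lb_norm = min_max_normalise(livebench)
print({name: round(value, 3) for name, value in lb_norm.items()})

# Hypothetical two-column example (made-up numbers, not the MiMo data).
toy_columns = [
    {"A": 10, "B": 20, "C": 30},
    {"A": 40, "B": 80, "C": 60},
]
print(mean_of_normalised_columns(toy_columns))
```

Because the three LiveBench rows include the leaderboard's maximum and minimum, the normalised values match the Aggregate column: 1.000, 0.921 and 0.000. In the toy example, models B and C both end up at 0.75 even though each leads a different column.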

Element-wise combination. Where the same model appears in both sources (matched by exact name OR clear variant alias — e.g. Kimi K2.6 → Kimi K2.6 Thinking, GPT-5.4 → GPT-5.4 Thinking xHigh Effort), the two normalised aggregates are averaged. Otherwise the single source aggregate is used.
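A rough sketch of the combination rule follows, assuming the alias matching is a plain lookup table from MiMo names to LiveBench names. The function name `combine` and the dictionary layout are illustrative assumptions; the scores are taken from the Sources column of the ranking table, and the two aliases are the ones named above.

```python
def combine(mimo, livebench, aliases):
    """Average the two normalised aggregates for matched models; otherwise keep the single one."""
    # Rename MiMo entries to their LiveBench names where an alias is declared.
    mimo_renamed = {aliases.get(name, name): score for name, score in mimo.items()}
    combined = {}
    for name in mimo_renamed.keys() | livebench.keys():
        present = [src[name] for src in (mimo_renamed, livebench) if name in src]
        combined[name] = sum(present) / len(present)
    return combined


# Normalised aggregates copied from the "Sources" column of the ranking table.
mimo = {"GPT-5.4": 0.856, "Kimi K2.6": 0.677, "MiMo-V2.5-Pro": 0.632}
livebench = {
    "GPT-5.4 Thinking xHigh Effort": 0.991,
    "Kimi K2.6 Thinking": 0.823,
    "GPT-5.5 Thinking xHigh Effort": 1.000,
}
# The two alias examples given in the text (MiMo name -> LiveBench name).
aliases = {
    "GPT-5.4": "GPT-5.4 Thinking xHigh Effort",
    "Kimi K2.6": "Kimi K2.6 Thinking",
}

result = combine(mimo, livebench, aliases)
for name, score in sorted(result.items(), key=lambda kv: -kv[1]):
    print(f"{score:.4f}  {name}")
```

The two aliased models receive the mean of their MiMo and LiveBench values, while MiMo-V2.5-Pro and GPT-5.5 Thinking xHigh Effort pass through with their single-source score unchanged.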

Matched pairs (7). DeepSeek V4 Pro, GLM 5.1, MiMo-V2-Pro, Kimi K2.6, Gemini 3.1 Pro, Claude Opus 4.6, GPT-5.4. The one remaining model from the MiMo comparison (MiMo-V2.5-Pro) has no LiveBench equivalent yet.

Caveats. The peer-group size differs (8 vs 113): a 1.0 in LiveBench is the best of 113 models, while a 1.0 in a MiMo benchmark column is the best of only 8. Models present only in MiMo can therefore look inflated relative to LB-only models, so interpret the combined ranking as a coarse summary, not a precision instrument. Variant-name matching is also a judgement call; aggressive matching (e.g. GPT-5.4 ↔ GPT-5.4 Thinking xHigh Effort) merges high-scoring LiveBench entries into MiMo positions and can shift the top of the table substantially.
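To get a feel for how much a matching decision moves a score, the sketch below compares each matched model's averaged aggregate with the score it would have kept as a LiveBench-only entry. The numbers are copied from the Sources column of the ranking table; the helper name `matching_shift` is a hypothetical label for this illustration.

```python
# (MiMo aggregate, LiveBench normalised score) for the seven matched pairs,
# copied from the "Sources" column of the ranking table.
matched = {
    "GPT-5.4 Thinking xHigh Effort": (0.856, 0.991),
    "Claude 4.6 Opus Thinking High Effort": (0.822, 0.909),
    "Kimi K2.6 Thinking": (0.677, 0.823),
    "GLM 5.1": (0.667, 0.782),
    "DeepSeek V4 Pro": (0.566, 0.852),
    "Gemini 3.1 Pro Preview High": (0.239, 0.984),
    "MiMo V2 Pro": (0.110, 0.533),
}


def matching_shift(mimo_score, livebench_score):
    """Averaged score minus the LiveBench-only score (negative means matching lowers it)."""
    return (mimo_score + livebench_score) / 2 - livebench_score


shifts = {name: matching_shift(*pair) for name, pair in matched.items()}
for name, shift in sorted(shifts.items(), key=lambda kv: kv[1]):
    print(f"{shift:+.4f}  {name}")
```

In this table every matched model has a MiMo aggregate below its LiveBench score, so matching lowers all seven. The largest move is Gemini 3.1 Pro Preview High, which has a LiveBench-only score of 0.984 but a combined aggregate of 0.611 (rank 52).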